Today’s ADAS stacks typically combine cameras, radars, and ultrasonic sensors, with thermal sensing or lidar added in certain applications. Each modality has strengths and limitations. Cameras provide rich visual detail but struggle in low light. Radars perform well in poor weather but classify objects less precisely. Thermal sensing can detect heat signatures beyond headlight range but must be interpreted alongside other data.

Adding sensors can improve coverage, but it also increases system cost, complexity, and data volume. At a certain point, the challenge shifts from sensing more to understanding more.

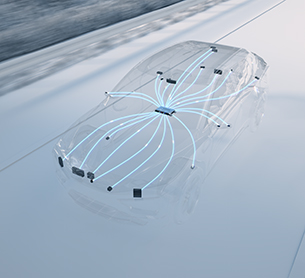

That is why the focus is moving toward tighter integration across sensing technologies rather than treating each sensor as an independent subsystem. When data from multiple modalities is combined intelligently, the system can maintain confidence even when one input is degraded.
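One simple way to picture how combined data keeps confidence when a single input degrades is inverse-variance weighting of two range estimates. The sketch below is illustrative only: the `Measurement` type, the `fuse` helper, and the numbers are assumptions made for this example, not a description of any production ADAS stack.

```python
from dataclasses import dataclass


@dataclass
class Measurement:
    sensor: str      # hypothetical sensor label
    range_m: float   # estimated distance to the object, in meters
    variance: float  # uncertainty in m^2; larger means less trustworthy


def fuse(measurements):
    """Inverse-variance weighted mean. Returns (estimate, fused_variance)."""
    weights = [1.0 / m.variance for m in measurements]
    total = sum(weights)
    estimate = sum(w * m.range_m for w, m in zip(weights, measurements)) / total
    return estimate, 1.0 / total


# Assumed night-time scenario: the camera is degraded, the radar is not.
readings = [
    Measurement("camera", range_m=48.0, variance=25.0),
    Measurement("radar", range_m=50.0, variance=0.25),
]

estimate, fused_variance = fuse(readings)
print(f"fused range: {estimate:.2f} m, variance: {fused_variance:.4f} m^2")
```

Because each weight is the inverse of the reported variance, the degraded camera barely moves the fused estimate, and the fused variance is never larger than that of the best single sensor. This holds only if each sensor's stated uncertainty is realistic and the errors are independent, which is an assumption of the sketch.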

This shift toward earlier and deeper sensor fusion is closely tied to broader changes in vehicle architecture. Centralized compute platforms and higher-bandwidth networks make it possible to process richer data streams and evaluate them together, instead of passing simplified object lists between separate modules. Modern AI- and machine-learning-based perception and driving policies allow a more accurate and more general interpretation of the driving environment.
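The difference between passing object lists and evaluating data together can be shown with a toy example. Here each module either thresholds its own detection score before reporting an object, or the raw scores are combined first and thresholded once. The threshold, the scores, and the noisy-OR combination rule (which assumes independent sensors) are all assumptions chosen for illustration. This is score-level combination, a much simpler stand-in for the feature-level fusion a real perception stack would use.

```python
THRESHOLD = 0.5  # hypothetical detection threshold


def object_list_fusion(scores, threshold=THRESHOLD):
    """Each module thresholds on its own; an object exists only if
    at least one module reported it in its object list."""
    return any(score >= threshold for score in scores.values())


def joint_evaluation(scores, threshold=THRESHOLD):
    """Combine raw scores first (noisy-OR, assuming independent sensors),
    then apply the threshold once to the combined evidence."""
    miss_probability = 1.0
    for score in scores.values():
        miss_probability *= 1.0 - score
    return (1.0 - miss_probability) >= threshold


# Assumed weak detections of the same object from two sensors.
scores = {"camera": 0.40, "radar": 0.45}

print("object-list fusion detects:", object_list_fusion(scores))
print("joint evaluation detects:  ", joint_evaluation(scores))
```

With these numbers neither sensor clears the threshold alone, so the object never appears in any object list, while the combined evidence (about 0.67) does clear it. Information discarded before fusion cannot be recovered afterwards.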

The result is a more complete and more intelligent picture of the vehicle's environment and the traffic scenario, and a better chance of handling the corner cases that still cause disengagements.