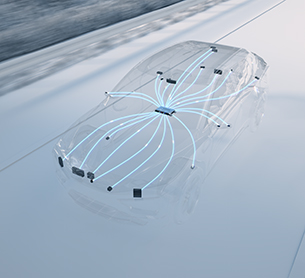

For ADAS to approach that level of reliability, its perception stack needs to run on a more centralized, unified architecture that can support AI‑driven fusion instead of isolated sensor pipelines.

The Limits of Fragmented Sensing

Early ADAS were typically structured around distinct sensing domains. Cameras, radars, and lidars each operated within largely independent processing pipelines, often supported by separate compute units and software stacks.

This architecture was effective for first-generation features. But as performance expectations increased — particularly for real-time object classification and intervention — fragmentation became a constraint.

When sensors operate in silos, they lack the ability to dynamically compensate for one another. If one sensing modality is degraded by environmental conditions, the system’s overall awareness can suffer. In advanced safety applications, that limitation is significant.

More importantly, fragmented architectures introduce complexity. Multiple ECUs, duplicated processing functions, and disconnected software layers can drive up costs and create scalability and integration challenges, especially as OEMs seek to deploy ADAS features across diverse vehicle platforms and global markets.

The next step forward required a different approach.

From Isolated Inputs to Unified Perception

Modern sensor fusion represents that shift.

At its core, sensor fusion is not simply about redundancy. It is about interpreting data from multiple sensing modalities within a shared perception framework. Instead of keeping each sensing pipeline fully separate, modern fusion combines information either after each sensor's data has been processed on its own (late fusion) or earlier, at the raw-data level (early fusion), depending on the architecture.
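To make the late-fusion idea concrete, here is a minimal sketch in Python. It assumes each sensor pipeline has already produced its own range estimate with an uncertainty, and it combines them with inverse-variance weighting, one common textbook choice rather than a method this article prescribes. The `Detection` type, the `late_fuse` function, and the numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical per-sensor output: distance to an object plus uncertainty."""
    range_m: float   # estimated distance to the object, in meters
    variance: float  # uncertainty of that estimate, in meters squared


def late_fuse(detections: list[Detection]) -> Detection:
    """Combine already-processed estimates with inverse-variance weighting.

    More certain sensors (smaller variance) get proportionally more weight.
    """
    weights = [1.0 / d.variance for d in detections]
    total = sum(weights)
    fused_range = sum(w * d.range_m for w, d in zip(weights, detections)) / total
    # The fused variance is smaller than any single input variance.
    return Detection(range_m=fused_range, variance=1.0 / total)


# Illustrative values only: the camera is less certain about range than the radar.
camera = Detection(range_m=42.0, variance=4.0)
radar = Detection(range_m=40.0, variance=1.0)
fused = late_fuse([camera, radar])
print(f"{fused.range_m:.1f} m, variance {fused.variance:.2f}")
```

The fused estimate sits closer to the radar reading because the radar is assumed to be more certain about range. An early-fusion design would instead feed raw or lightly processed camera and radar data into a single model before any per-sensor detections exist.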

Consider automatic emergency braking. A camera-based system may struggle to classify objects accurately in heavy glare or fog. Radar, however, performs more reliably in many adverse conditions. When camera and radar data are fused within a centralized perception stack, the system can maintain stronger object detection capability even when one modality is partially degraded.
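The braking scenario can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production AEB design: each sensor reports a detection confidence, a hypothetical "health" factor discounts a degraded sensor, and the results are combined with a noisy-OR rule that treats the sensors as independent. The function names, the health values, and the 0.8 braking threshold are all made up for the example.

```python
def fuse_confidence(readings: list[tuple[float, float]]) -> float:
    """Noisy-OR combination of (confidence, health) pairs.

    Each sensor's confidence is scaled by its health (0.0 = unusable,
    1.0 = fully trusted). The fused value is the probability that at
    least one sensor is right, assuming the sensors err independently.
    """
    miss_probability = 1.0
    for confidence, health in readings:
        miss_probability *= 1.0 - confidence * health
    return 1.0 - miss_probability


BRAKE_THRESHOLD = 0.8  # hypothetical decision threshold


def should_brake(readings: list[tuple[float, float]]) -> bool:
    return fuse_confidence(readings) >= BRAKE_THRESHOLD


# Illustrative fog scenario: the camera is unsure and marked as degraded,
# while the radar still sees the obstacle clearly.
camera_in_fog = (0.3, 0.4)  # (confidence, health)
radar = (0.9, 1.0)

camera_only = fuse_confidence([camera_in_fog])
fused = fuse_confidence([camera_in_fog, radar])
print(f"camera only: {camera_only:.3f}, fused: {fused:.3f}")
```

With these made-up numbers, the camera alone falls well short of the threshold, while the fused confidence clears it. The degraded camera still contributes a little evidence rather than being discarded outright.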

The result is not just a backup. It is a more resilient and context-aware interpretation of the environment.

This mirrors human perception. Awareness is enhanced because multiple inputs are continuously integrated — not because any single input is flawless.

Architecture as the Enabler

As ADAS capabilities expand toward more sophisticated L2 and L2+ systems, fusion increasingly becomes a question of system architecture rather than of individual sensors.

Centralized processing allows perception algorithms to run within a unified software environment rather than across multiple isolated compute domains. This reduces duplication, streamlines integration, and enables more efficient use of hardware resources.

For OEMs, the implications are practical:

- Greater scalability across vehicle segments

- More flexible feature deployment by region and trim

- Reduced system complexity and cost

- A clearer pathway for future software updates and enhancements — supporting the shift toward software-defined vehicles (SDVs)

In other words, fusion is not only a performance upgrade — it is a foundation for scalable deployment.

Just as humans do not require multiple independent “brains” to interpret different sensory inputs, next-generation ADAS benefit from centralized compute architectures that integrate sensing and decision-making into a cohesive whole.

Teaching Machines to See – Smarter

ADAS remains a rapidly evolving field. Environmental variability, regulatory requirements, and cost constraints continue to shape its trajectory. But one principle has become increasingly clear: improving perception is less about adding sensors and more about integrating them intelligently.

Teaching machines to see is not about sharper vision alone. It is about designing systems that interpret diverse inputs together, preserve awareness under real-world conditions, and scale efficiently across platforms.

Sensor fusion, grounded in a systems-level architecture, moves ADAS closer to that goal — delivering perception that is more resilient, more coherent, and better aligned with the demands of modern mobility.

The future of ADAS will not be defined by the strength of any single sensor. It will be defined by how seamlessly sensing, software, and compute units operate as one.